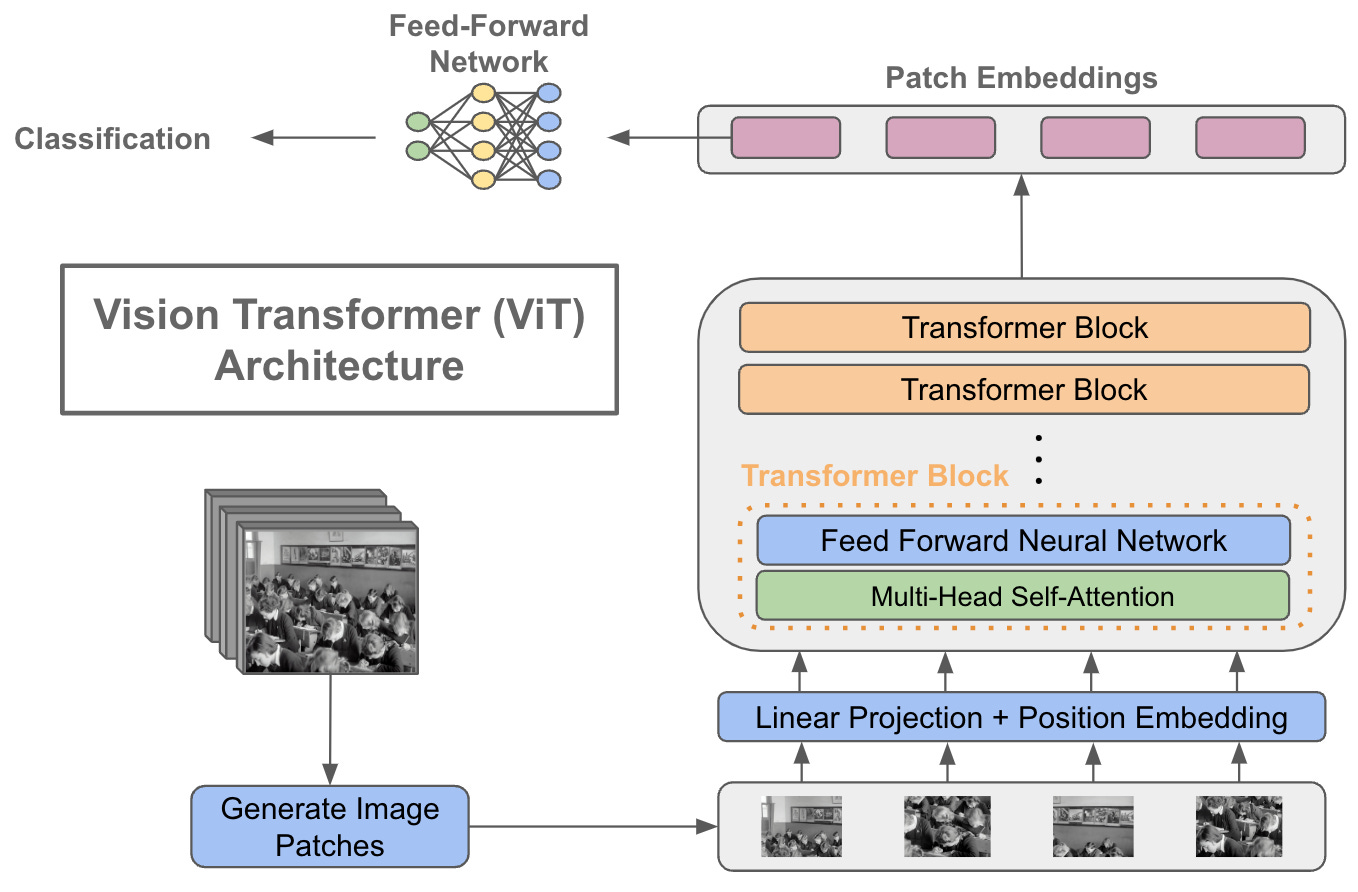

AK on Twitter: "Attention Mechanisms in Computer Vision: A Survey abs: https://t.co/ZLUe3ooPTG github: https://t.co/ciU6IAumqq https://t.co/ZMFHtnqkrF" / Twitter

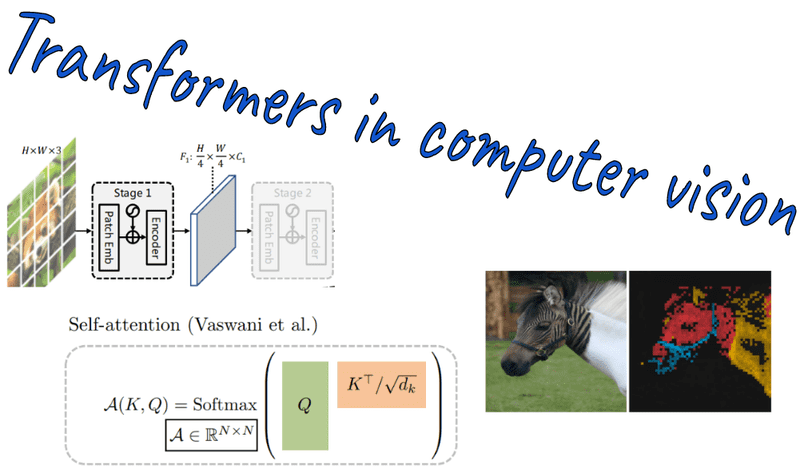

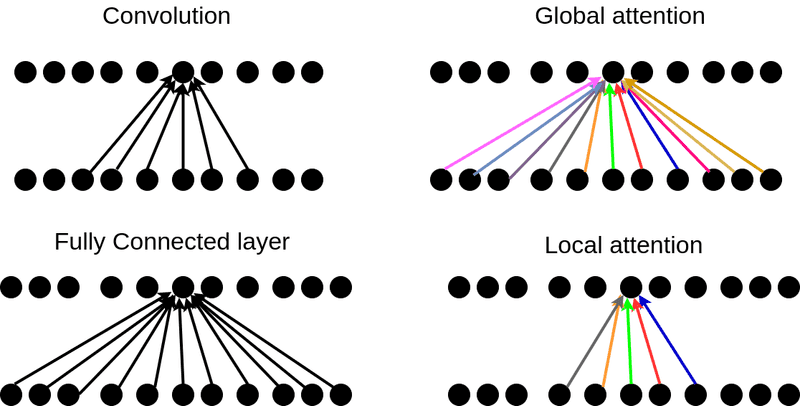

Chaitanya K. Joshi on Twitter: "Exciting paper by Martin Jaggi's team (EPFL) on Self-attention/Transformers applied to Computer Vision: "A self-attention layer can perform convolution and often learns to do so in practice."

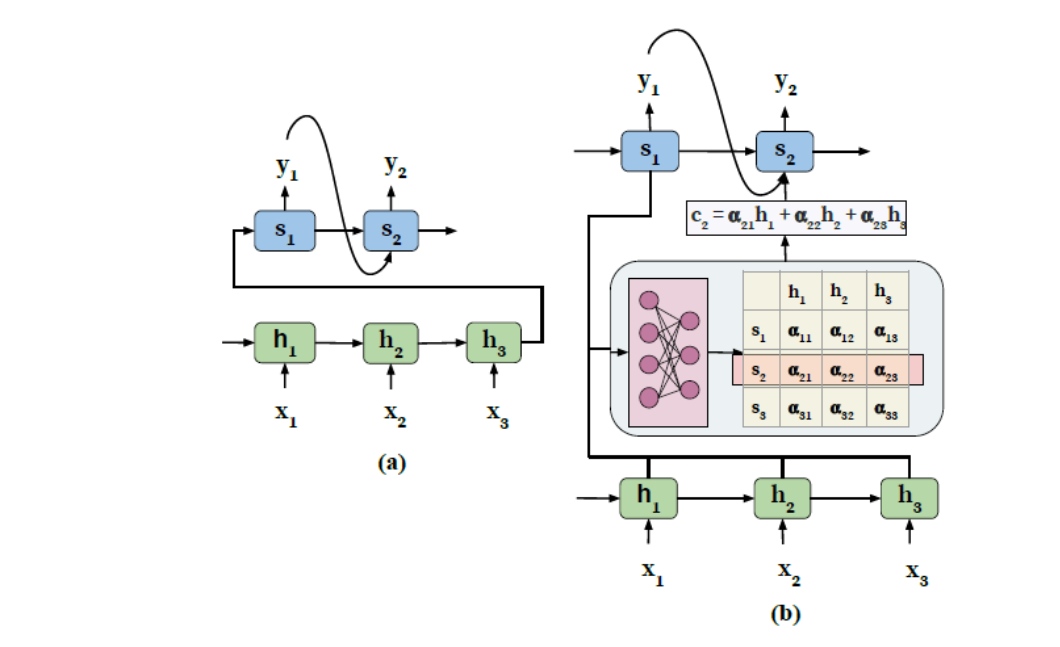

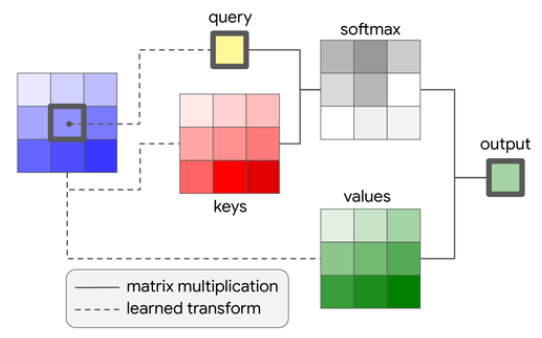

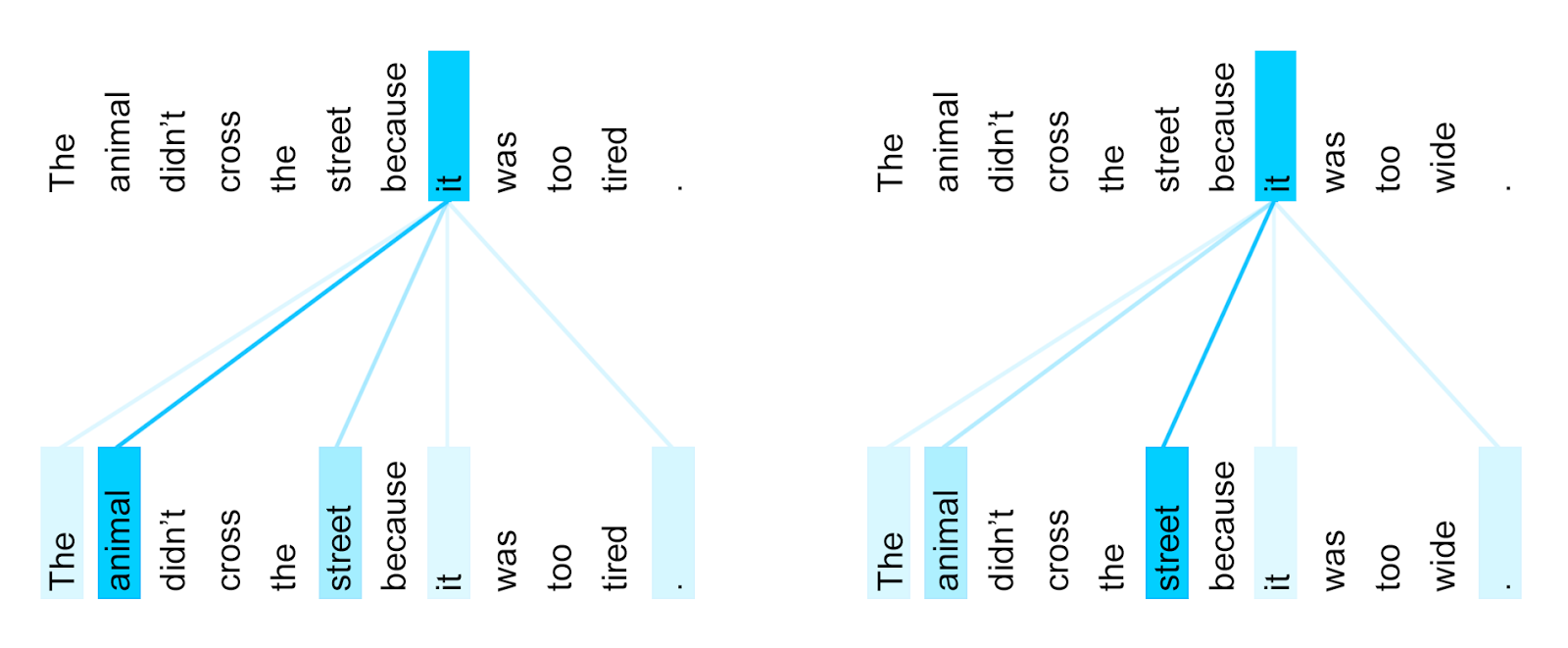

How Attention works in Deep Learning: understanding the attention mechanism in sequence models | AI Summer

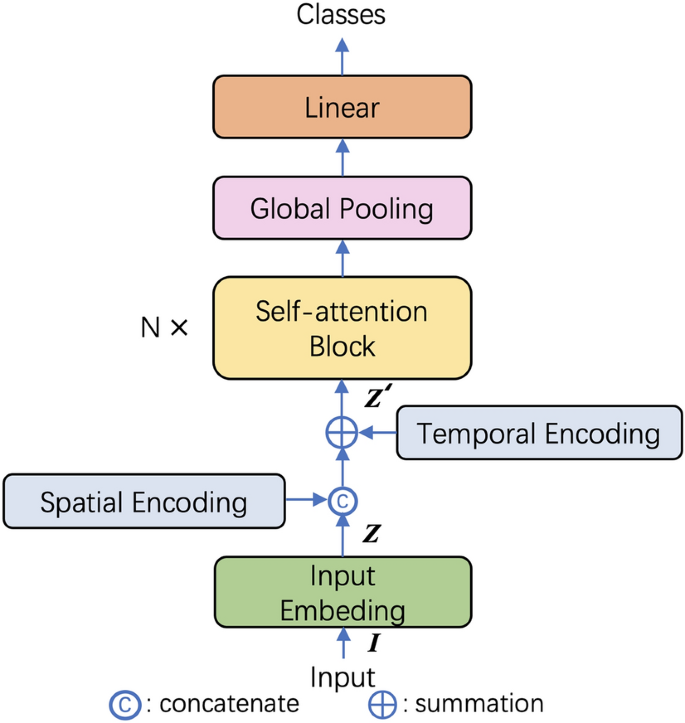

New Study Suggests Self-Attention Layers Could Replace Convolutional Layers on Vision Tasks | Synced

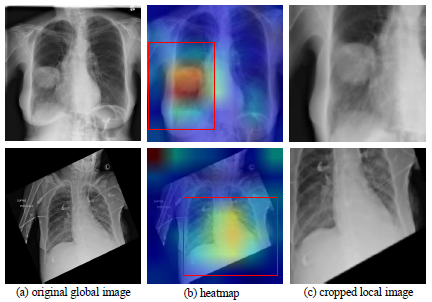

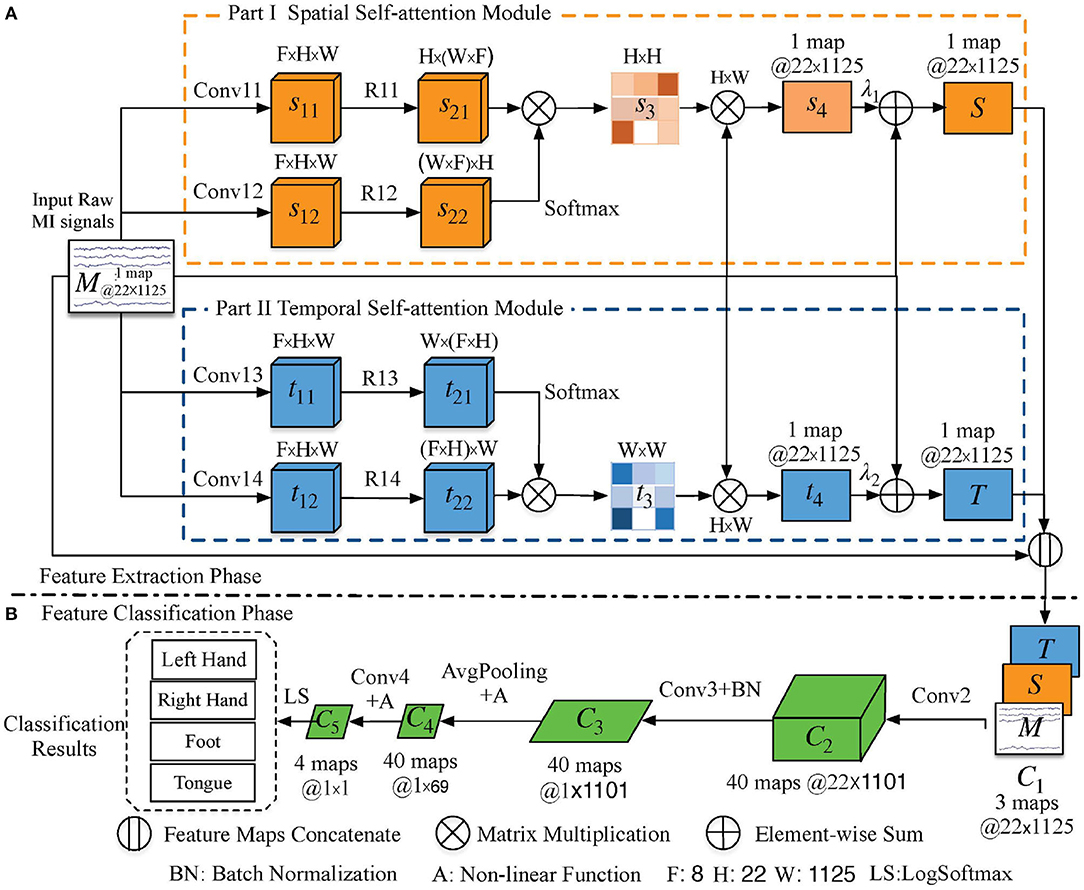

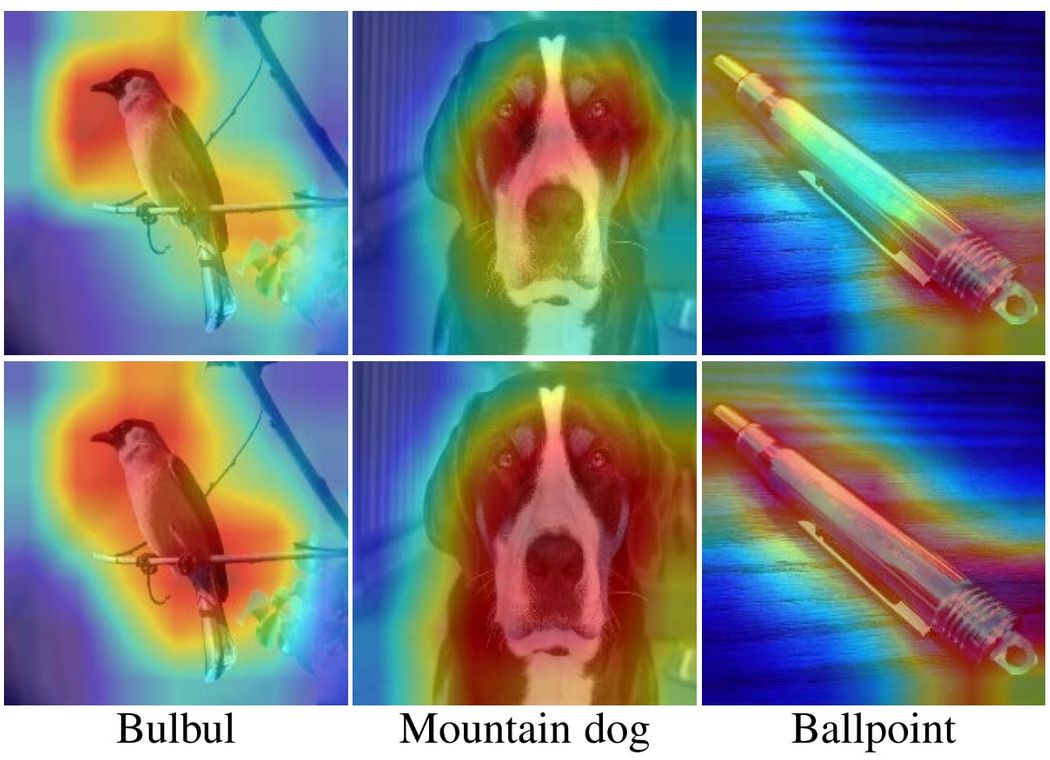

Spatial self-attention network with self-attention distillation for fine-grained image recognition - ScienceDirect